My experience with software development using natural language: vibe coding

Vibe coding seems to be the new trend in AI. Supposedly, anyone, without programming knowledge, can build an application using prompts. I was curious about what these tools could offer us and decided to test them.

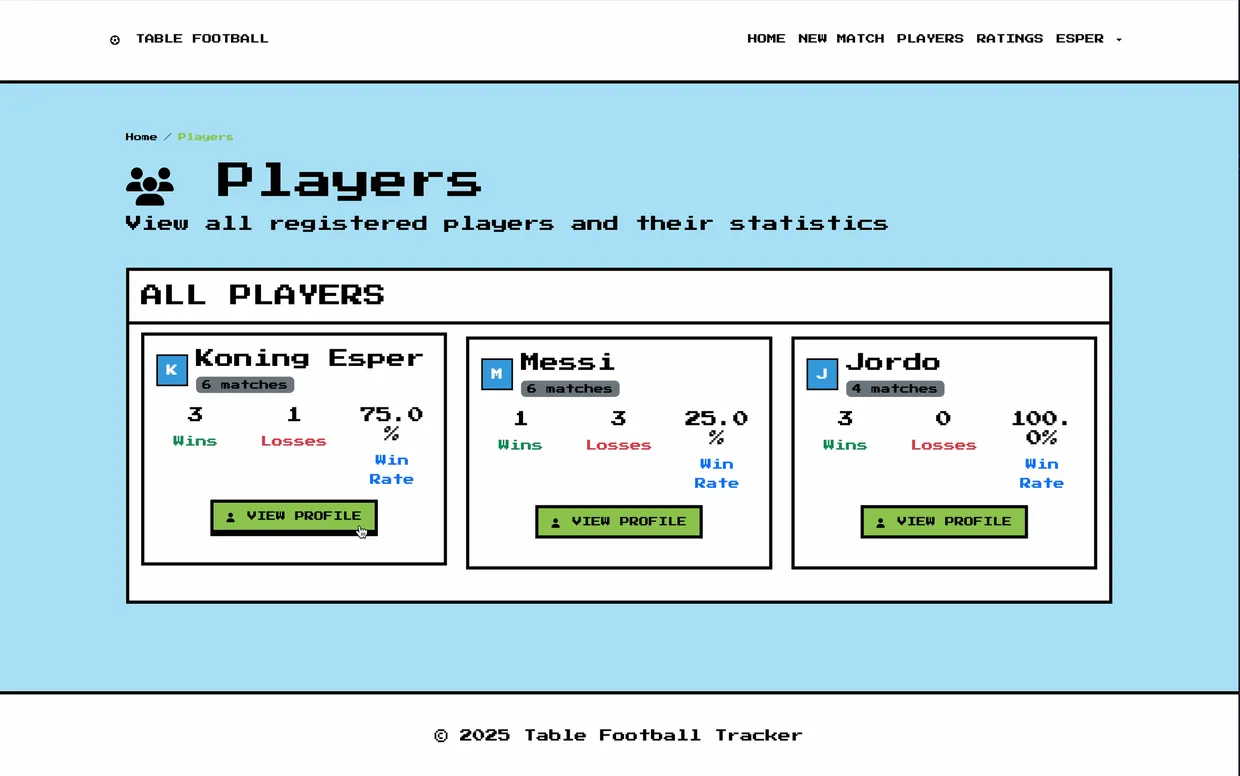

Of course, we already use AI in many forms. The most common are autocomplete in IDEs, like GitHub Copilot, and chat interfaces. The well-known ChatGPT, Claude, and Gemini regularly appear on my screen and those of my colleagues. I must admit, I once made a spin-off of our pool ratings application: foosball ratings. And I didn't type a single line of code for it. The business logic, like score calculations, was also 'vibe coded' with Windsurf. This was impressive. The tool fixed its own errors, but the result was still not flawless. I also didn't work in a disciplined way at the time: no proper version control, automated tests, or tickets.

So, to put it to the test, I experimented with applying 'vibe coding' in a way that ensures quality: with version control and merge requests, according to our coding conventions, per user story, and with automated tests. We described a project: a platform for organizing lightning talks, like Meetup.com. I did this with two well-known tools, Windsurf and Cursor. There are more tools, such as Roocode and Claude Code; I left these out of the research.

The preliminary research: how do you guide AI models to work in a targeted way?

To make these tools work in a controlled way and according to standards, you set basic rules. For example, define the technology stack (like Django and Bootstrap) and specify that commands must be executed via Docker from a Makefile. Indicate that you prefer small files, want logic divided into Django apps, and expect tests with Pytest and Django Webtest. For major changes, first make a plan together with the LLM. This provides control and the ability to apply your own experience. Instruct the model to only touch relevant code and leave settings or configuration unchanged. Also, include the relevant file names in your prompt, to keep the context small and give the model a better understanding of your prompt.
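As a rough illustration of how such rules and file names can be combined per ticket, here is a minimal sketch. It is not how Windsurf or Cursor store rules internally; `PROJECT_RULES`, `Ticket`, and `build_prompt` are hypothetical names, and the file paths are made up for the example.

```python
from dataclasses import dataclass, field

# Hypothetical project rules, paraphrasing the guidelines described above.
PROJECT_RULES: tuple[str, ...] = (
    "Stack: Django and Bootstrap.",
    "Run every command via Docker, through a Makefile target.",
    "Prefer small files; split logic into Django apps.",
    "Write tests with Pytest and Django Webtest.",
    "For major changes, propose a plan first and wait for approval.",
    "Only touch relevant code; leave settings and configuration unchanged.",
)


@dataclass
class Ticket:
    """One user story to hand to the tool (illustrative structure)."""

    title: str
    description: str
    relevant_files: list[str] = field(default_factory=list)


def build_prompt(ticket: Ticket, rules: tuple[str, ...] = PROJECT_RULES) -> str:
    """Combine the fixed rules, the ticket text, and the relevant file names."""
    lines = ["Rules:"]
    lines += [f"- {rule}" for rule in rules]
    lines += ["", f"Ticket: {ticket.title}", ticket.description]
    if ticket.relevant_files:
        # Naming the files keeps the context small and focused.
        lines += ["", "Relevant files:"]
        lines += [f"- {path}" for path in ticket.relevant_files]
    return "\n".join(lines)


if __name__ == "__main__":
    example = Ticket(
        title="Sign up for an event",
        description="A logged-in user can sign up for an upcoming event.",
        relevant_files=["events/models.py", "events/views.py"],  # made-up paths
    )
    print(build_prompt(example))
```

In practice, the fixed rules usually live in the tool's own rules file, so only the ticket text and the file names change from prompt to prompt.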

The execution

I prompted per ticket. Each merge request had to meet the same requirements that we as humans must meet: static types, new automated tests, and an update to the documentation. Developing the models, logic, and frontend went very quickly, but it also felt chaotic: a lot of code and many errors. The tool could often fix those errors itself, and otherwise my instructions steered it to the desired solution.

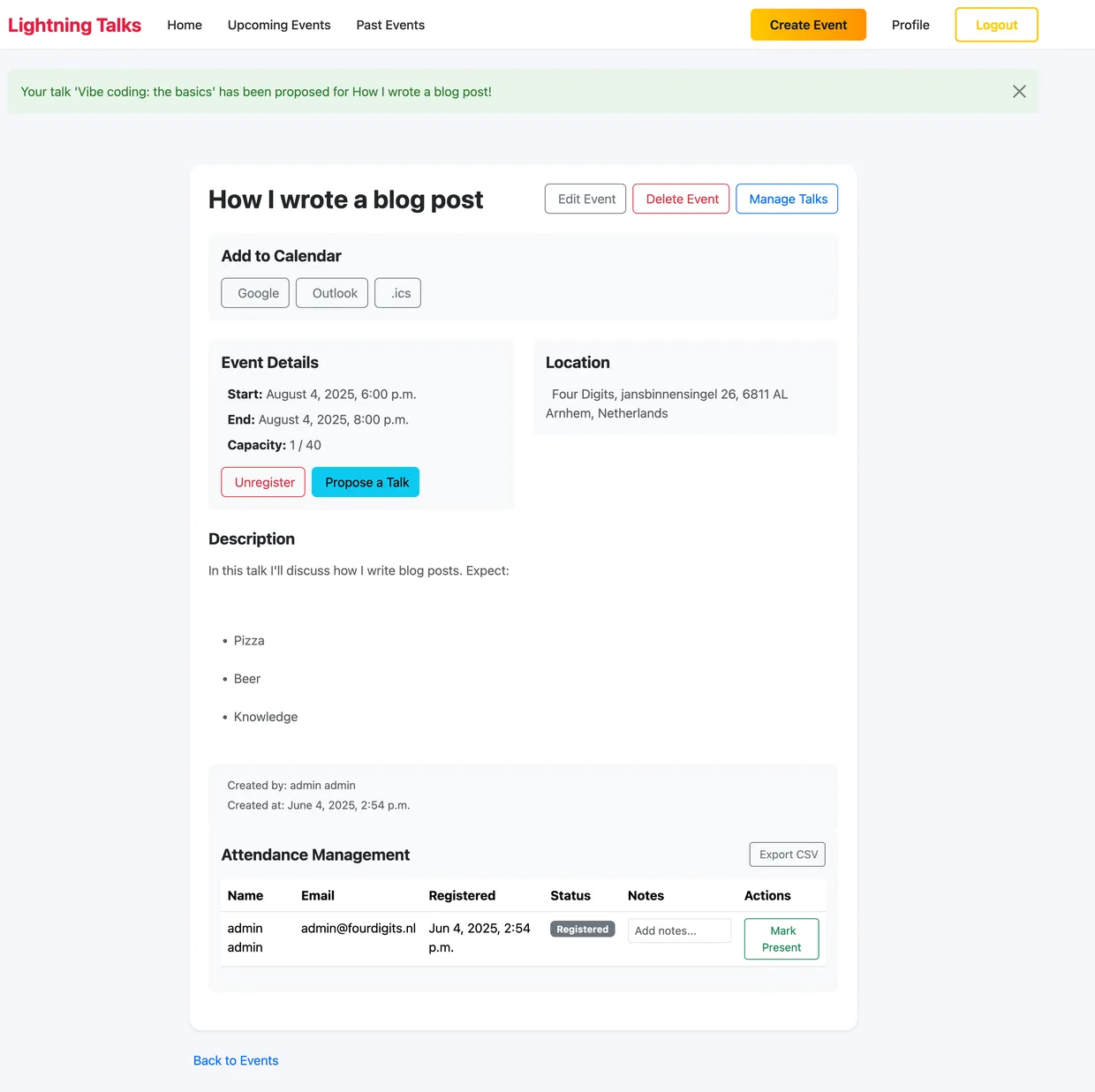

Result

In 1 day, I built an application with the following requirements:

- User registration

- Event creation

- Signing up for an event

- Submitting a lightning talk at an event

- Managing lightning talks (email upon registration, approval, rejection, etc.)

- Email confirmation (with asynchronous task)

- Event reminders

- Add to calendar feature supporting .ics, Google and Outlook

- Built on our cookie-cutter template for easy hosting and consistency

- 90% test coverage

Most of the time is now spent on QA: static types, functional review, and automated tests. Where a developer normally splits their time about 50/50 between typing code and testing, this shifts to roughly 20/80.

Typing standard CRUD views and user registration works excellently with vibe coding. I am very impressed that it takes into account cases that people tend to overlook, such as exception handling and validation rules. It also neatly leaves a to-do for missing functionality (like a 'forgot password' option in the registration form) and for documentation updates (though not always). Moreover, the tool is excellent at applying a simple responsive Bootstrap template. Integrating packages and API connections also goes very well, because the tool can read the documentation (tip: use an MCP web server to link to the correct documentation, or place the documentation in your project).

But it is not all positive: I found a major permission error that made registered users visible to everyone. Also, despite instructions, the tool is not good at consistency. It places tests in the wrong files and frontend code in unexpected places, and it sometimes suddenly uses Django permissions and at other times an if/else in the template.
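One way to guard against both the permission error and the inconsistency described above is to keep each access rule in a single function and cover it with a test. The sketch below is framework-free on purpose and is not the project's actual code; `User`, `can_view_attendees`, and `attendee_list` are hypothetical, and the rule that only organizers may see registered users is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    """Simplified stand-in for a framework user object."""

    name: str
    is_authenticated: bool = True
    is_organizer: bool = False


ANONYMOUS = User(name="anonymous", is_authenticated=False)


def can_view_attendees(user: User) -> bool:
    """Single rule for who may see registered users (assumed: organizers only)."""
    return user.is_authenticated and user.is_organizer


def attendee_list(user: User, attendees: list[str]) -> list[str]:
    """Return the attendees for permitted users and refuse everyone else."""
    if not can_view_attendees(user):
        raise PermissionError("not allowed to view registered users")
    return list(attendees)


def test_anonymous_cannot_see_attendees() -> None:
    """Regression test for the kind of leak found during review."""
    try:
        attendee_list(ANONYMOUS, ["alice"])
    except PermissionError:
        return
    raise AssertionError("anonymous user could see registered users")


if __name__ == "__main__":
    test_anonymous_cannot_see_attendees()
    print(attendee_list(User("olivia", is_organizer=True), ["alice"]))
```

With the rule in one place, both views and templates can call the same function, which makes it easier to spot when the tool introduces a second, diverging check.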

Conclusion

Vibe coding is not yet advanced enough to develop scalable, high-quality, and secure software. Although it is excellent for quickly creating a lot of simple code, it is less suitable for consistency and the finishing touches. This makes these tools excellent for:

- Prototyping

- Setting up a project

- Small applications (microservices)

- CRUD views

- API integration (when high-quality docs are available)

While these AI tools can take away repetitive work, supervision by experienced developers with in-depth knowledge of scalable and secure web applications is absolutely necessary. This is already true for a small project like this one, and even more so for large projects, where the context is far too large for a 'vibe coding' tool to comprehend.

Curious how this approach could work for your organisation? Let’s explore the possibilities. Please don't hesitate to contact us.