My takeaways regarding AI from PyCon Italy in Bologna 2025

I’ve been back in the cold and rainy Netherlands for a few days, still processing all the notes I took at PyCon Italy in Bologna (May 28-31, 2025). Since most projects I work on are written in Django, it was great to see how many people in the Python community know and use it. Even when a talk wasn't about Django, the Django ORM often appeared in examples. What I love about PyCon is the broad range of subjects, from running Python in the browser (PyScript) to prompt engineering with AI. In this post, I'll give an overview of what captured my interest. Spoiler alert: mostly AI-related talks.

Bridging the Gap Between Engineers and Data Scientists

Francesco Lucantoni's presentation on the collaboration between software engineers and data scientists was notable. He highlighted their different backgrounds and emphasized the engineer's role in introducing tools like linters and version control to create reproducible experiments, advocating a move away from notebooks. Lucantoni advised a gradual and inclusive approach, even making it enjoyable through gamification. This strongly resonated with my own experiences working on projects with data scientists.

Diving into RAG and Sentence Transformers

Coincidentally, I had just started exploring Retrieval Augmented Generation (RAG) in a personal diary application the weekend before PyCon. RAG is an AI technique where a model retrieves external information to generate more accurate, up-to-date, and context-aware responses. It combines a retriever (to fetch documents) and a generator (to produce natural language output).
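
To make the retriever and generator split concrete, here is a minimal sketch. It assumes a naive word-overlap retriever and treats the LLM as a plain callable; the diary entries, function names, and prompt wording are all made up for illustration and are not taken from any talk.

```python
import re
from typing import Callable

# Hypothetical diary entries standing in for the external knowledge source.
ENTRIES = [
    "Attended PyCon Italy in Bologna and took notes on RAG.",
    "Went for a long bike ride along the canal.",
    "Tried the pgvector extension for PostgreSQL.",
]


def words(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question: str, entries: list[str], top_k: int = 2) -> list[str]:
    """Retriever: rank entries by how many words they share with the question."""
    scored = [(len(words(question) & words(entry)), entry) for entry in entries]
    # Keep only entries with at least one shared word, best match first.
    matches = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [entry for _, entry in matches[:top_k]]


def build_prompt(question: str, context: list[str]) -> str:
    """Combine the retrieved entries and the question into one prompt."""
    context_block = "\n".join(f"- {entry}" for entry in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {question}"


def answer(question: str, entries: list[str], generate: Callable[[str], str]) -> str:
    """Generator: pass the question plus retrieved context to an LLM callable."""
    return generate(build_prompt(question, retrieve(question, entries)))
```

In a real application, `generate` would wrap a call to an LLM, and the word-overlap retriever would typically be replaced by something smarter.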

At PyCon, several talks allowed me to dive deeper into the underlying technology: sentence transformers. In essence, Sentence Transformers (also known as SBERT) is a Python library for using and training embedding models. Paolo Melchiorre, for example, showed how embeddings enable semantic search: a search for "guitar" can also return related entries like "rock" and "music". While we are used to this from Large Language Models, this is a relatively lightweight library that you can install with pip and run locally in Python, with no API call required. To search the embeddings efficiently you do need a vector store, which can easily be added to PostgreSQL using the pgvector extension, a setup that matches our favorite stack.
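
The idea behind semantic search can be sketched without any model at all. The example below assumes hand-made three-dimensional vectors as stand-ins for real embeddings (a real embedding model produces much longer vectors) and ranks entries by cosine similarity. The vectors and function names are invented for illustration.

```python
import math

# Hand-made toy vectors standing in for real embeddings (an assumption:
# in practice these would come from an embedding model).
TOY_EMBEDDINGS = {
    "guitar": [0.9, 0.8, 0.1],
    "rock": [0.8, 0.9, 0.2],
    "music": [0.7, 0.7, 0.3],
    "tax return": [0.1, 0.0, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_search(query: str, embeddings: dict[str, list[float]], top_k: int = 2) -> list[str]:
    """Return the top_k entries whose vectors are closest to the query's vector."""
    query_vector = embeddings[query]
    scores = [
        (cosine_similarity(query_vector, vector), text)
        for text, vector in embeddings.items()
        if text != query
    ]
    scores.sort(reverse=True)  # highest similarity first
    return [text for _, text in scores[:top_k]]
```

With these toy vectors, searching for "guitar" ranks "rock" and "music" far above "tax return", even though none of the words overlap.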

Practical Tips for Developing RAG Applications

Building on this, I attended a talk by Duarte Carmo on developing RAG applications that work in practice. Here are the key takeaways:

Use Structured Output

Use Pydantic models to structure the LLM's output, with fields for facts, supporting quotes, and reasoning. While this doesn't eliminate hallucinations, it lets users check the response against the sources, which builds trust.
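
As a rough illustration of the shape such an answer could take, here is a dependency-free sketch that uses a standard-library dataclass as a stand-in for a Pydantic model (with Pydantic, the model class would handle the validation). The field names follow the three items mentioned in the talk; the `unverified_quotes` helper is my own assumption about how verification might work.

```python
import json
from dataclasses import dataclass


@dataclass
class Answer:
    facts: list[str]
    quotes: list[str]
    reasoning: str


def parse_answer(raw_json: str) -> Answer:
    """Parse the LLM's JSON reply, failing loudly on missing or mistyped fields."""
    data = json.loads(raw_json)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field in ("facts", "quotes"):
        value = data.get(field)
        if not isinstance(value, list) or not all(isinstance(item, str) for item in value):
            raise ValueError(f"'{field}' must be a list of strings")
    if not isinstance(data.get("reasoning"), str):
        raise ValueError("'reasoning' must be a string")
    return Answer(data["facts"], data["quotes"], data["reasoning"])


def unverified_quotes(answer: Answer, sources: list[str]) -> list[str]:
    """Return the quotes that do not appear verbatim in any retrieved source."""
    return [q for q in answer.quotes if not any(q in source for source in sources)]
```

Quotes that come back from `unverified_quotes` can be flagged in the UI, so the user sees which parts of the answer are not backed by a source.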

Route User Queries

There are different types of questions. A question like, "In which city was PyCon Italy?" can be answered with a simple keyword search. However, a question like, "Summarize my learnings this year," requires finding related entries and grouping them into themes. A technique called "query routing" can determine the best method. An LLM can act as a router with a prompt like this:

"Decide which filter to use for the user question.

- Keyword filter: Use when the question targets specific topics or terms.

- Representative filter: Use for general questions about trends, summaries, or overall insights.

- Respond only with the filter type.

Examples:

- ‘Summarize my learnings’ → Representative filter

- ‘What are my goals this month?’ → Representative filter

- ‘In which city was PyCon Italy?’ → Keyword filter"
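
A router like this could be wired up roughly as follows. This is a sketch under assumptions: the LLM is any callable that takes a prompt and returns text, the few-shot examples are omitted from the prompt for brevity, and falling back to the keyword filter on an unexpected reply is my own choice, not something from the talk.

```python
from enum import Enum
from typing import Callable


class Filter(Enum):
    KEYWORD = "Keyword filter"
    REPRESENTATIVE = "Representative filter"


ROUTER_PROMPT = """Decide which filter to use for the user question.
- Keyword filter: Use when the question targets specific topics or terms.
- Representative filter: Use for general questions about trends, summaries, or overall insights.
Respond only with the filter type.

Question: {question}"""


def route(question: str, llm: Callable[[str], str]) -> Filter:
    """Ask the LLM which filter fits the question and map its reply to a Filter."""
    reply = llm(ROUTER_PROMPT.format(question=question)).strip().lower()
    for candidate in Filter:
        if candidate.value.lower() in reply:
            return candidate
    # Assumption: fall back to the cheaper keyword search on an unexpected reply.
    return Filter.KEYWORD
```

Parsing the reply into an enum keeps the rest of the application independent of the exact wording the model returns.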

Understand User Queries

We developers often overestimate the quality of user prompts. Users might ask something vague like "is weather italy good". The process of interpreting the user's intent is called "query understanding". This can be improved by using an LLM to expand a simple query into several more specific ones.

An example prompt would be:

"Input: Your role is to act as a prompt expansion assistant for a Retrieval-Augmented Generation (RAG) application. You will be given a user's initial prompt. Your objective is to create three distinct, improved prompts that accurately reflect what the user likely intended to ask. Here is the prompt: is weather Italy good

Output:

- What is the 7-day weather forecast for popular tourist cities in Italy, including Rome, Florence, and Venice?

- What is the typical weather in Italy during the summer months of July and August?

- Which season offers the best weather for sightseeing across Italy, with mild temperatures and minimal rain?"
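
In code, the expansion step could look something like the sketch below. It assumes the LLM is a callable that returns its suggestions as lines starting with "- ", as in the example output above; the function names and the fallback to the original query are hypothetical.

```python
from typing import Callable

EXPANSION_PROMPT = (
    "Your role is to act as a prompt expansion assistant for a "
    "Retrieval-Augmented Generation (RAG) application. You will be given a "
    "user's initial prompt. Your objective is to create three distinct, "
    "improved prompts that accurately reflect what the user likely intended "
    "to ask. Return one prompt per line, each starting with '- '.\n"
    "Here is the prompt: {query}"
)


def parse_expansions(reply: str) -> list[str]:
    """Extract the bulleted prompts from the LLM reply, ignoring other lines."""
    lines = (line.strip() for line in reply.splitlines())
    return [line[2:].strip() for line in lines if line.startswith("- ")]


def expand_query(query: str, llm: Callable[[str], str], limit: int = 3) -> list[str]:
    """Expand a vague user query into up to `limit` more specific queries."""
    expansions = parse_expansions(llm(EXPANSION_PROMPT.format(query=query)))[:limit]
    # Assumption: if nothing could be parsed, search with the original query.
    return expansions or [query]
```

Each expanded query can then be sent to the retriever separately, and the results merged before generating the answer.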

Duarte also recommended learning the basics of RAG before jumping into complex, full-featured tools. Fundamentally, RAG applications are not that complicated, and a good understanding of the core principles is valuable.

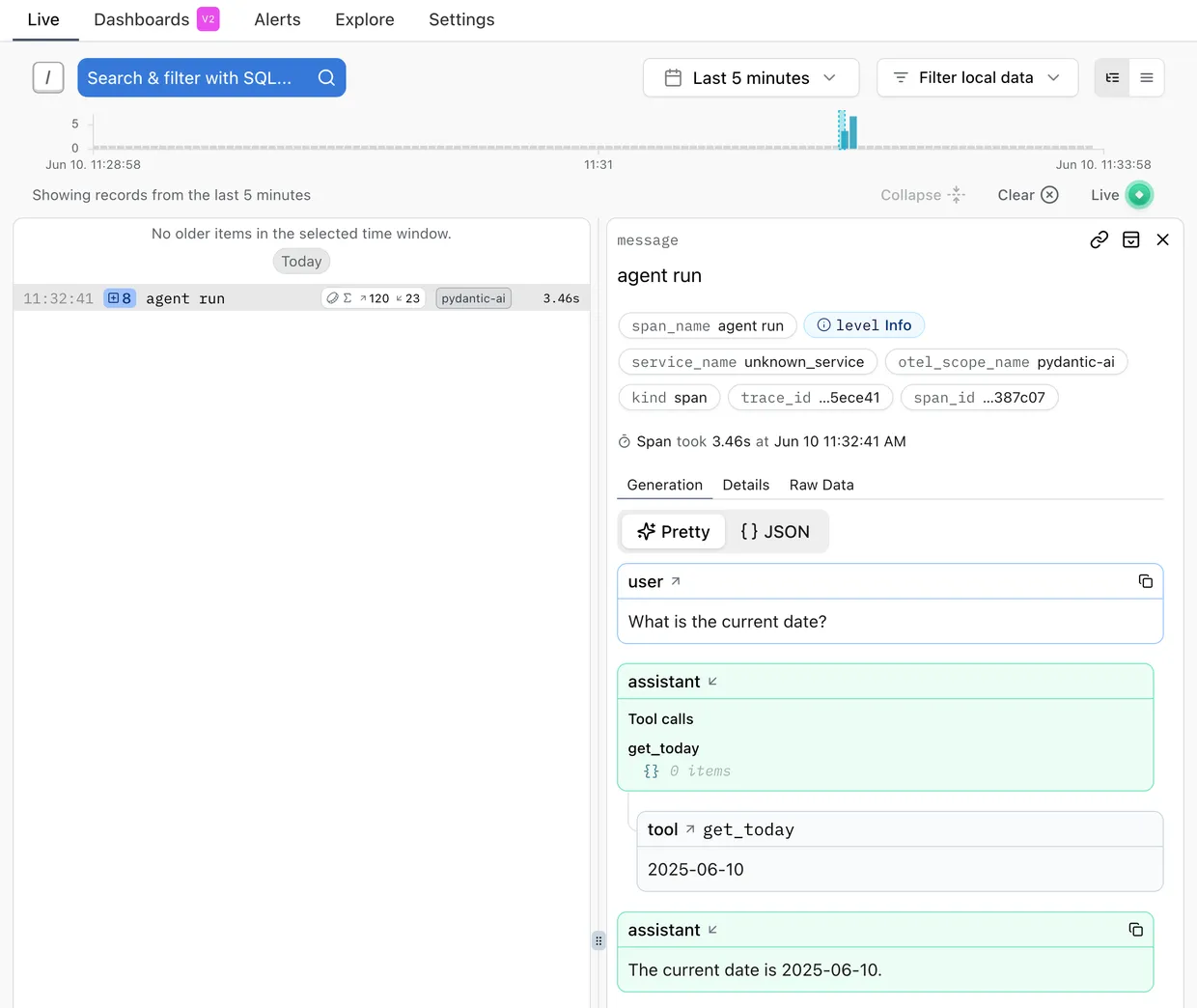

Logging, Testing, and Debugging with Pydantic AI and Logfire

As mentioned, user prompts can be unpredictable, retrieval can go wrong, and LLMs can hallucinate. Developing a good RAG application is an iterative process, which makes good logging, debugging, and testing essential.

I attended a talk by Marcelo Trylesinski about Pydantic AI, which, combined with Pydantic Logfire, tackles this problem. Pydantic AI provides structured output using models, Logfire logs and traces the steps the LLM took, and Pydantic Evals helps automate testing the output, optionally using another LLM as a judge (LLMJudge).
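
To show the idea of LLM-as-a-judge testing in isolation, here is a library-free sketch. It is explicitly not the Pydantic Evals API: the `Case` class, the `evaluate` function, and the PASS/FAIL protocol are all hypothetical, and both the application and the judge are plain callables.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    question: str
    rubric: str  # what a good answer should satisfy, in plain language


def evaluate(
    cases: list[Case],
    app: Callable[[str], str],
    judge: Callable[[str], str],
) -> float:
    """Run each case through the app and return the fraction the judge passes."""
    passed = 0
    for case in cases:
        output = app(case.question)
        verdict = judge(
            f"Rubric: {case.rubric}\nOutput: {output}\nReply PASS or FAIL."
        )
        if verdict.strip().upper().startswith("PASS"):
            passed += 1
    return passed / len(cases) if cases else 0.0
```

Running a set of cases like this after every prompt or retrieval change gives a single score to compare between iterations.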

Putting It into Practice and Sharing My Learnings in a Lightning Talk

Inspired by these talks, I created a demo and gave a lightning talk on building an agent with Pydantic AI and Logfire. The code is available on GitHub: https://github.com/Esperk/pydantic-ai-logfire-demo.

At Four Digits, we organize lightning talks four times a year. You are welcome to attend or even present a topic yourself. More information is available here: https://www.fourdigits.nl/lightning-talks/.